Why Cheap IoT Breaks Trust: A Deeper Dive into the BSides Seattle 2026 Hardware CTF

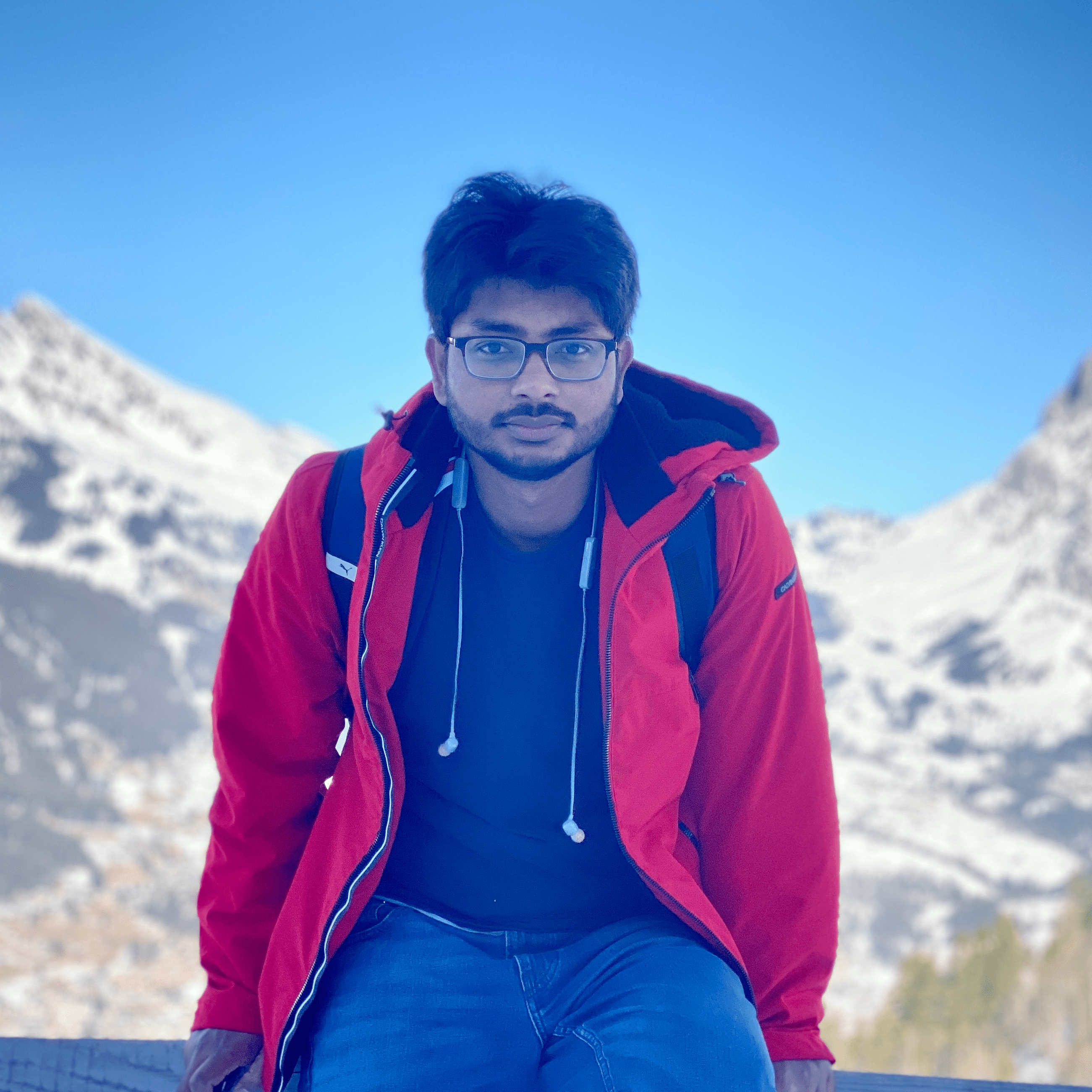

If you are reading this, you likely participated in the BSides Seattle 2026 Hardware Hacking Lab—a CTF-style hands-on exercise that I designed together with Vraj and Khyati. While the lab focused on practical interaction with a real embedded device, its purpose extended far beyond completing technical challenges or capturing flags. This article steps back from the hands-on experience to explain the larger security concepts you actually explored.

After completing the lab, it may seem that the primary accomplishment was gaining access to a router—connecting through UART, dumping flash memory, extracting a password from firmware, and modifying the device to boot your own changes. In reality, these actions collectively formed a practical evaluation of one of the most fundamental questions in system security:

Can a device prove that the software it executes is authentic?

The exercises were not merely about hacking a router. They were designed to expose how trust is established—or silently fails—inside modern embedded systems. What you performed was an empirical examination of execution authority, secret protection, and the presence (or absence) of a hardware root of trust.

Modern computing security ultimately depends on control over execution. Encryption, authentication, and access control mechanisms assume that trusted software is running on trusted hardware. If firmware itself can be replaced, higher-layer defenses lose meaning. The exercises performed during this lab systematically evaluated whether such architectural guarantees existed in a commodity embedded device.

Observing Trust Formation

The first interaction with the device occurred through the UART interface during system boot. At this stage, the processor initializes memory and prepares execution before any operating system protections are active. This phase determines what software the device ultimately trusts.

Secure systems treat early boot stages as critical security boundaries. Debug interfaces are typically restricted or permanently disabled in production devices because control during boot implies control over the entire platform. The unrestricted UART console revealed that this router exposes its trust establishment process without authentication, implicitly assuming physical access equals authorization.

Firmware Extraction and Hard-Coded Secrets

Dumping the flash memory allowed the firmware to be analyzed independently of the hardware. Within the binary image, operational configuration data, including the Wi-Fi password, was found embedded directly in persistent storage. The credential was neither encrypted nor derived dynamically; it existed as static data surrounded by erased flash regions.
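As a rough illustration of why a plaintext credential stands out in a dump, the sketch below scans a byte string for printable ASCII runs and keeps only those bordered by erased flash (0xFF). The dump contents, the `wifi_psk=` key name, and both helper functions are hypothetical stand-ins, not the router's actual layout or the tooling used in the lab.

```python
import re

ERASED = 0xFF  # flash cells read back as 0xFF once erased

def find_strings(image: bytes, min_len: int = 8):
    """Yield (offset, text) for each run of printable ASCII of at least min_len bytes."""
    pattern = re.compile(rb"[\x20-\x7e]{%d,}" % min_len)
    for match in pattern.finditer(image):
        yield match.start(), match.group().decode("ascii")

def erased_neighbourhood(image: bytes, offset: int, length: int, window: int = 16) -> bool:
    """Return True if the bytes just before and just after a string are all erased."""
    before = image[max(0, offset - window):offset]
    after = image[offset + length:offset + length + window]
    return all(b == ERASED for b in before + after)

# Synthetic stand-in for a flash dump: one config string sitting in erased flash.
dump = b"\xff" * 64 + b"wifi_psk=hypothetical-passphrase" + b"\xff" * 64

hits = [(off, text) for off, text in find_strings(dump)
        if erased_neighbourhood(dump, off, len(text))]
print(hits)
```

Nothing here defeats a protection mechanism. The search succeeds only because the data is stored as readable text.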

Hard-coded passwords represent more than poor implementation practice. They indicate the absence of hardware-backed secret protection. Secure embedded platforms typically derive encryption keys from immutable hardware sources such as OTP memory or secure elements, ensuring that extracted firmware alone cannot reveal usable secrets. In this device, possession of firmware directly resulted in credential recovery, demonstrating that confidentiality relied entirely on obscurity.
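To make the contrast concrete, here is a minimal sketch of hardware-backed key derivation, assuming a per-device secret that firmware can use but that never appears in the flash image. The secrets, the label, and the use of HMAC-SHA256 as a simple key derivation function are illustrative assumptions; real platforms use their own key ladders and KDFs.

```python
import hashlib
import hmac

def derive_storage_key(device_secret: bytes, label: bytes) -> bytes:
    """Derive a per-device key from a hardware-held secret (HMAC-SHA256 as a simple KDF)."""
    return hmac.new(device_secret, label, hashlib.sha256).digest()

# Hypothetical per-device secrets. On real hardware these would live in OTP fuses
# or a secure element and would not be present in a flash dump.
device_a_secret = bytes.fromhex("11" * 32)
device_b_secret = bytes.fromhex("22" * 32)

# The label is the only input the firmware image needs to carry, and it is not sensitive.
label = b"wifi-config-encryption-v1"

key_a = derive_storage_key(device_a_secret, label)
key_b = derive_storage_key(device_b_secret, label)
print(key_a != key_b)
```

Under this design, an attacker holding only the dumped firmware sees the label and any encrypted configuration, but not the key needed to read it.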

Modifying Execution and Secure Boot

The defining experiment involved modifying the firmware image and reflashing the device. Although the modification altered only a visible boot message, the system accepted and executed the modified firmware without resistance. No cryptographic signature verification rejected the change, and no integrity mechanism halted execution.
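The kind of change involved can be sketched as an in-place string patch. This is an illustrative example on a synthetic image, not the lab's exact procedure: the banner text and the `patch_in_place` helper are hypothetical, and the same-length constraint is an assumption made here so that nothing after the patched string shifts. Some image formats also carry checksums that would need updating, which this sketch does not cover.

```python
import hashlib

def patch_in_place(image: bytes, old: bytes, new: bytes) -> bytes:
    """Overwrite the first occurrence of `old` with `new`, keeping the image length unchanged."""
    if len(new) > len(old):
        raise ValueError("replacement must not be longer than the original string")
    offset = image.find(old)
    if offset < 0:
        raise ValueError("original string not found in image")
    padded = new.ljust(len(old), b" ")  # pad with spaces so later offsets do not shift
    return image[:offset] + padded + image[offset + len(old):]

# Synthetic image; the banner is hypothetical, not the router's real boot message.
original = b"\x00" * 32 + b"Booting vendor firmware" + b"\x00" * 32
patched = patch_in_place(original, b"Booting vendor firmware", b"Booting my firmware")

same_size = len(patched) == len(original)
digest_changed = hashlib.sha256(patched).digest() != hashlib.sha256(original).digest()
print(same_size, digest_changed)
```

Even a cosmetic edit changes the image digest, which is exactly what a verifying boot stage would detect.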

This outcome demonstrates the absence of secure boot. In secure architectures, execution follows a cryptographic chain of trust beginning from immutable boot ROM code:

Boot ROM → Bootloader → Kernel → Operating System

Each stage verifies the authenticity of the next before execution proceeds. The successful boot of modified firmware shows that this chain is either missing or unenforced. The router cannot distinguish legitimate firmware from attacker-controlled firmware, meaning execution authority belongs to whoever writes the flash memory last.
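A simplified model of that chain is sketched below. It assumes each stage embeds a SHA-256 digest of the next stage and that the boot ROM holds the digest of the first mutable stage. Production secure boot usually verifies signatures against a key anchored in hardware rather than bare digests; plain hashes are used here only to keep the example dependency-free. All stage names and contents are hypothetical.

```python
import hashlib
from dataclasses import dataclass

@dataclass
class Stage:
    name: str
    code: bytes
    next_digest: bytes = b""  # digest of the following stage, embedded in this one

    def image(self) -> bytes:
        # The embedded digest is part of what gets verified, so it cannot be swapped silently.
        return self.code + self.next_digest

def build_chain(parts):
    """Link stages back to front so each one embeds the digest of its successor."""
    chain, next_digest = [], b""
    for name, code in reversed(parts):
        stage = Stage(name, code, next_digest)
        next_digest = hashlib.sha256(stage.image()).digest()
        chain.insert(0, stage)
    return chain, next_digest  # the final digest is what the boot ROM would hold

def boot(chain, rom_digest):
    """Return the names of the stages that ran, stopping at the first failed check."""
    ran, expected = [], rom_digest
    for stage in chain:
        if hashlib.sha256(stage.image()).digest() != expected:
            break
        ran.append(stage.name)
        expected = stage.next_digest
    return ran

parts = [("bootloader", b"bootloader code"), ("kernel", b"kernel code"), ("os", b"os image")]
chain, rom_digest = build_chain(parts)
clean_boot = boot(chain, rom_digest)

# Patch the kernel, as in the lab, without being able to change the ROM-held digest.
chain[1].code = b"kernel code with patched banner"
tampered_boot = boot(chain, rom_digest)
print(clean_boot, tampered_boot)
```

In this model the patched kernel never runs, because the bootloader's embedded digest no longer matches. The router in the lab behaved as if the comparison in `boot` did not exist.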

The Missing Hardware Root of Trust

A hardware root of trust provides an immutable foundation from which software authenticity and device identity are derived. Without such a root, software effectively validates itself, creating a circular trust dependency vulnerable to physical modification.

The exercises demonstrated that firmware integrity in this platform depends entirely on writable storage rather than silicon-enforced verification. As a result, both credential extraction and persistent firmware modification were possible without exploiting software vulnerabilities.

Supply Chain Implications

The implications extend beyond laboratory experimentation. Consumer networking devices frequently pass through complex manufacturing, refurbishment, and resale ecosystems. Devices lacking verified boot can be modified anywhere along this supply chain, allowing malicious firmware to be installed prior to purchase.

Because the device performs no cryptographic verification during boot, end users have no reliable method to confirm firmware authenticity. A compromised router may operate normally while silently intercepting traffic or harvesting credentials, transforming inexpensive IoT hardware into persistent attack infrastructure.

Why Modern Platforms Resist These Attacks

Modern secure systems—including smartphones and cloud infrastructure platforms—implement hardware-enforced trust mechanisms such as immutable boot verification, device-unique cryptographic identities, hardware-derived storage encryption, and measured boot attestation.

Under such architectures, modified firmware either fails verification and does not boot, or produces different boot measurements that attestation can detect, and extracted firmware does not expose usable secrets. The difference between these systems and the evaluated router is architectural rather than incremental: security begins in silicon.
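Measured boot can be illustrated with a hash-extend register, loosely modeled on how a TPM platform configuration register accumulates measurements: the new value is the hash of the old value concatenated with the digest of the component being measured. The component contents below are hypothetical, and this is a sketch of the idea rather than a TPM interface.

```python
import hashlib

def extend(register: bytes, component: bytes) -> bytes:
    """Fold one component's digest into the running measurement register."""
    measurement = hashlib.sha256(component).digest()
    return hashlib.sha256(register + measurement).digest()

def measure_boot(components) -> bytes:
    """Measure each boot component in order, starting from an all-zero register."""
    register = bytes(32)
    for component in components:
        register = extend(register, component)
    return register

genuine = measure_boot([b"bootloader code", b"kernel code", b"os image"])
patched = measure_boot([b"bootloader code", b"kernel code with patched banner", b"os image"])
reordered = measure_boot([b"kernel code", b"bootloader code", b"os image"])
print(genuine != patched, genuine != reordered)
```

Because the register can only be extended, never set directly, a verifier comparing the final value against a known-good one can tell that something in the boot sequence changed.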

What You Actually Accomplished

The purpose of the exercise was not simply to teach firmware dumping or binary patching. Each step evaluated a foundational security property. UART access exposed early execution trust boundaries. Flash extraction evaluated confidentiality assumptions. Hard-coded credentials revealed failures in secret management. Firmware patching tested whether execution authenticity was enforced.

Together, these actions formed a complete trust audit of an embedded system. Rather than exploiting bugs, participants evaluated whether the platform deserved trust at all.

Looking Forward

Understanding why this device failed provides motivation to study modern secure architectures designed to prevent exactly these outcomes. Concepts such as hardware roots of trust, secure boot, device identity derivation, measured boot, and frameworks like DICE aim to ensure that systems remain trustworthy even under physical access.
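As a starting point for that study, the core idea behind DICE can be sketched in a few lines: a device secret held in hardware is combined with a measurement of the first mutable code to produce a compound device identifier (CDI). The HMAC-SHA256 construction below follows one commonly described form, and the secret and firmware bytes are hypothetical. Treat it as an illustration of the concept, not an implementation of the specification.

```python
import hashlib
import hmac

def compound_device_identifier(uds: bytes, firmware: bytes) -> bytes:
    """CDI sketch: HMAC-SHA256 keyed with the device secret over the firmware digest."""
    measurement = hashlib.sha256(firmware).digest()
    return hmac.new(uds, measurement, hashlib.sha256).digest()

# Hypothetical Unique Device Secret; in hardware it would be readable only by the DICE layer.
uds = bytes.fromhex("5a" * 32)

cdi_genuine = compound_device_identifier(uds, b"vendor firmware")
cdi_patched = compound_device_identifier(uds, b"vendor firmware with patched banner")
print(cdi_genuine != cdi_patched)
```

Keys and identities derived from the CDI change whenever the firmware changes, so modified firmware cannot impersonate the original device identity even when it is allowed to run.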

You did not merely break a router. You learned how trust fails—and why building trustworthy systems requires security anchored in hardware rather than assumptions.